The Global Open Data Index has changed a lot this year, from our methodology to our software. This has been a community effort. This year we also collaborated with an amazing group of community leaders who helped gather the submissions in different regions as well as an extraordinary group of reviewers who helped make sure all the data we gathered are correct and accurate. At the same time, we aim for the GODI to be a useful tool to advocate for better and more available data.

GODI’s success is based on the effort that our community makes each year. After four years of Index efforts, we want to share some insights into how our country sample changed this year.

Methodological note

To get to the results presented here we took two different approaches to analyzing data from the Index.

- We gathered all the final GODI results from 2013 onward. We removed every country that was not assessed in all four years of the Index, and we used only the datasets that were assessed in all four years. We then analyzed the URLs provided for each dataset to see whether the domains and locations of the data had changed. The changes presented below come from analyzing only the hostnames over time.

- We did a comparison of submissions from 2015 and 2016 to learn what has changed in the last year in terms of countries and regions that submitted.
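The two approaches above can be sketched in a few lines of Python. This is only an illustration of the idea, not the script we used: the record layout `(country, dataset, year, url)`, the function names, and the sample places and URLs are all assumptions made up for the example. It also simplifies the filtering step by keeping only country and dataset pairs that have an entry for every year.

```python
from urllib.parse import urlparse

# Years covered by the comparison (the four editions mentioned in the text).
YEARS = (2013, 2014, 2015, 2016)


def hostname(url):
    """Return the lowercase hostname of a URL, or None if it has none."""
    return urlparse(url).hostname


def hostname_changes(records, years=YEARS):
    """Find (country, dataset) pairs whose hostname changed over time.

    `records` is an iterable of (country, dataset, year, url) tuples,
    a hypothetical layout chosen for this sketch. Pairs that were not
    assessed in every year of `years` are dropped before comparing.
    """
    series = {}
    for country, dataset, year, url in records:
        series.setdefault((country, dataset), {})[year] = hostname(url)

    changed = {}
    for key, by_year in series.items():
        if not all(year in by_year for year in years):
            continue  # not assessed in every edition, so skip it
        ordered = [(year, by_year[year]) for year in sorted(years)]
        distinct = {host for _, host in ordered if host is not None}
        if len(distinct) > 1:
            changed[key] = ordered
    return changed


def compare_submissions(previous, current):
    """Compare two sets of places that submitted in consecutive years."""
    return {
        "dropped": sorted(previous - current),
        "new": sorted(current - previous),
    }


# Made-up sample data, for illustration only.
SAMPLE = [
    ("Exampleland", "budget", 2013, "http://data.example.org/budget"),
    ("Exampleland", "budget", 2014, "http://data.example.org/budget.csv"),
    ("Exampleland", "budget", 2015, "https://opendata.example.org/budget"),
    ("Exampleland", "budget", 2016, "https://opendata.example.org/budget"),
    ("Exampleland", "maps", 2013, "http://maps.example.org/a"),
    ("Exampleland", "maps", 2014, "http://maps.example.org/b"),
    ("Exampleland", "maps", 2015, "http://maps.example.org/c"),
    ("Exampleland", "maps", 2016, "http://maps.example.org/d"),
    ("Otherland", "budget", 2015, "http://old.example.net/budget"),
    ("Otherland", "budget", 2016, "http://new.example.net/budget"),
]

if __name__ == "__main__":
    for (country, dataset), history in hostname_changes(SAMPLE).items():
        print(country, dataset, history)
    print(compare_submissions({"Exampleland", "Otherland"}, {"Exampleland"}))
```

Comparing hostnames rather than full URLs means that a file renamed on the same portal does not count as a change, while a move to a new portal does.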

Findings

About the sustainability of a volunteer-based Index

This year we came across an issue that we had noticed before but that became more obvious during the development of this year’s Index.

Volunteer fatigue

Relying on volunteers from our Network to submit can prove challenging in certain regions. This was especially apparent in MENA (the Middle East and North Africa), Central Africa, parts of West Africa and Central Asia. For most of the regions where outreach is complex, we worked with allied organizations that could either find individuals to submit or knew the region well enough to find information about certain countries. For MENA, Central Asia and Central Africa, finding an allied organization or an individual proved difficult and, given our schedule, we decided to look into this more deeply in future editions.

In a similar vein, 2016 was the first time we didn’t have submissions from countries that had appeared in every previous edition of the Index. Even though we reached out to partner organizations and members of the community, we still had a difficult time finding submitters for this year.

This is an interesting issue for us, especially because all of the countries where we didn’t have submissions for the first time are or were part of our Network, whether as chapters or local groups.

We think that there might be several causes of this phenomenon:

- Did groups burn out, so that they no longer have the capacity to participate in GODI?

- We changed the submission phase timeline this year from August–September to November–January. December is usually a month when participation is low due to the holidays.

- Political events, such as general elections, always take priority over the Index. For example, the US elections were a big barrier to finding contributors to GODI from the US.

These changes make us ponder how we can keep the Index a community effort and still ensure its sustainability and accuracy. We would like to have the community’s input on this matter. Please add your thoughts on this forum thread.

Ranking changes

We wanted this year’s GODI to reflect, as accurately as possible, the state of open data in the places we assessed. For this, we tried to get submissions from people with extensive experience in the corresponding region or country. We see this both as a success, because it gives a more realistic representation of our community network, and as a challenge for next year, because we will have to double our efforts to cover more places while maintaining accuracy.

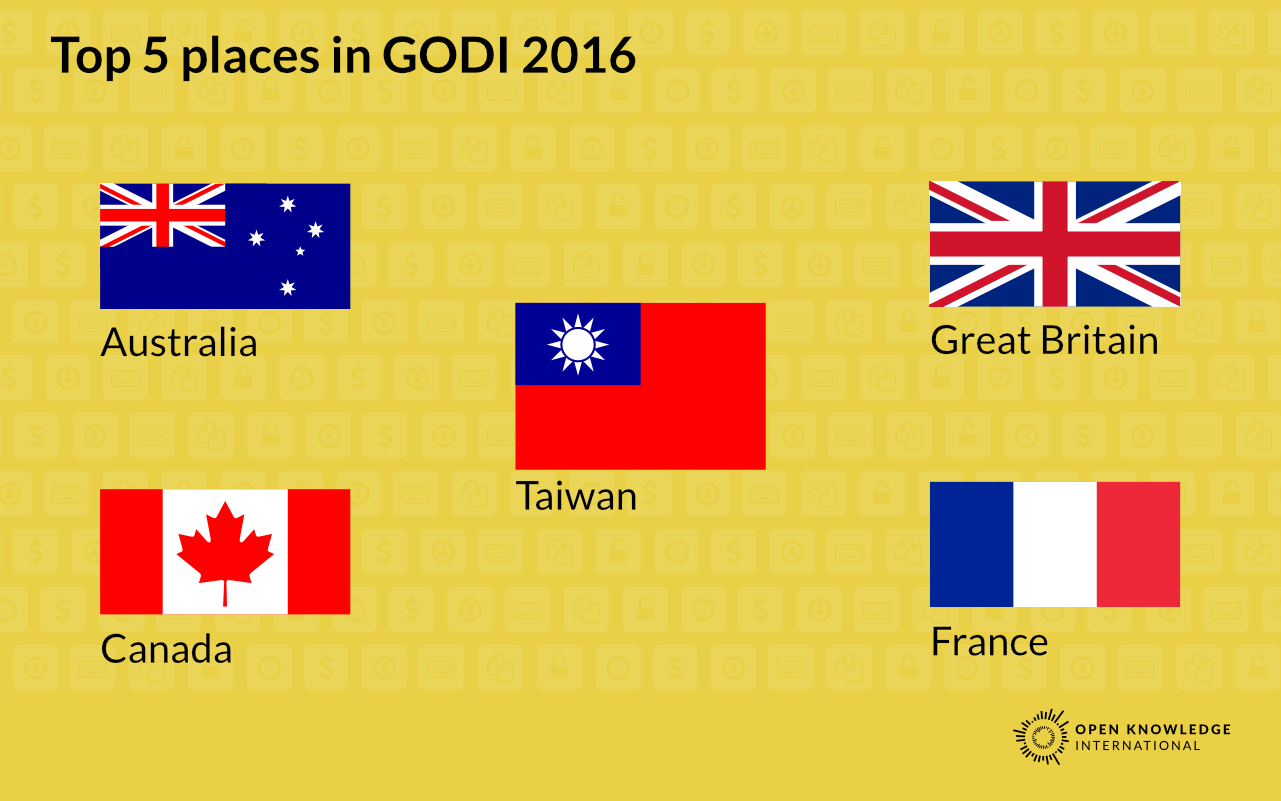

This change in the sample affected the ranking as well. In previous years, countries from the Middle East and North Africa (MENA) usually ranked lowest. This has changed, and Caribbean countries are now at the bottom. Similarly, since the country sample decreased, some countries could jump higher in the ranking. Such a jump may reflect an actual increase in the quality of the data, or it may reflect the changes in the methodology. For that reason we wouldn’t recommend comparing this year’s ranking with last year’s, since the datasets and the way of measuring them are different.

Conclusion

Making GODI happen requires engagement from the submitters for each country. This engagement can become exhausting, since the effort of finding data and submitting to GODI varies from dataset to dataset. After four years we have more questions than answers about how to make GODI sustainable through the community, but we want to offer some possible answers to work on.

Community engagement depends on momentum and relevance in the political moment. For example, this year we started to accept submissions a few days after the US elections and this clearly affected the engagement of volunteers in that country. Something similar happened with South Korea, where the political agenda focused on something more crucial for the country.

In other areas like MENA, Central Asia, and Central Africa we need to expand our network and identify possible partners, not only for projects like GODI but also to understand what open data means in that context. We also think that this reflects not only on the OKI network but on the greater open data movement, and shows gaps we need to address.

If we consider it necessary to cover more countries, we need to start engagement earlier in the year and also expand the network of possible submitters to groups that wouldn’t traditionally work with open data but might benefit from having access to it.

Also, we have found that some governments really care about GODI and are open to discussing results and using GODI as a tool for internal advocacy to improve the quality of their data. Having civil society and government as partners in the implementation of GODI from the beginning might be a good experiment for the near future. In addition, having some countries act as “champions” of openness, where they have used GODI as a measurement for improvements (e.g. Argentina), might help to get others on board.

Oscar Montiel is the international community coordinator at Open Knowledge Foundation